Digital Elephant

Cache Speed-up

The effective access time for a system containing a cache and a slower memory is not a constant, because if a data item is already in the cache when the CPU asks for it, the access time is the cache access time, but if not, the access time is the slower memory access time plus any overhead needed to insert it into the cache and make it available to the CPU. There are three cases.

- Cache hit. The requested item is in the cache.

- Clean miss. The requested item is not in the cache, but the spot where it should have been is empty, or occupied by other data that has not been altered.

- Dirty miss. The requested item is not in the cache, and the spot where it should go is currently occupied by altered data. That data must be written back into the slower memory before the needed data can be inserted into the cache, and made available to the CPU

If we know or can approximate the probabilities of each of these three cases, we can calculate the effective (average) access time this way:

teff = tcache * (1 + pclean*(tclean/tcache – 1) + pdirty*(tdirty/tcache – 1) )

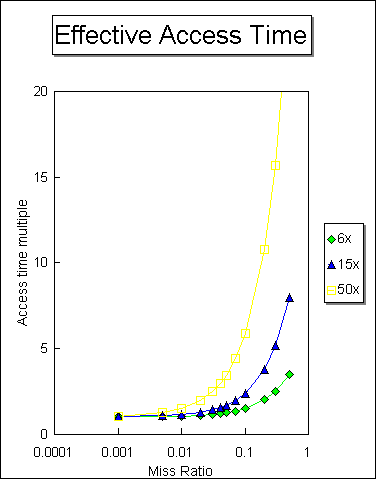

This equation tells us that the apparent access time of a cached memory system depends on three main parameters: the probability that a needed item will not be in the cache (the miss ratio), the cache access time, and the multiplier showing how much slower the slow backing memory is relative to the cache.

In addition, this equation provides guidance to designers of caches: to the extent possible, they should make the time for a dirty miss no greater than that for a clean miss, and they should minimize the delay added to a reference to make the data available to the CPU once the backing memory supplies it. This is often done by overlapping the outbound transfer of dirty data with the access to new data, so that the two times tclean and tdirty are the same. In this case, there is a single miss ratio, (the two miss probabilities add) and the equation is simpler:

teff = tcache * (1 + miss ratio * (tmiss/tcache – 1) )

This graph shows how the access time for a cached memory system varies as the miss ratio changes, and also as the speed ratio between the cache and the backing memory varies.